How Cutting-Edge Archaeology Can Improve Public Health

Please note that this article includes an image of human remains.

Twenty years ago, I held in my hands the leg bones of a 2-year-old child who had died in Birmingham in the early 1800s. They were bowed and felt too lightweight, almost like cardboard. As a biological anthropologist at Birmingham University in England, I had taken on the job of overseeing the human remains excavated from St. Martin’s church cemetery. Birmingham, a once-booming city that had been at the heart of the Industrial Revolution in Britain, had fallen on hard times as its metalworking and manufacturing industries died out in the last half of the 20th century. But regeneration of the downtown core was underway in the late 1990s, and the churchyard was being excavated to make room for redevelopment.

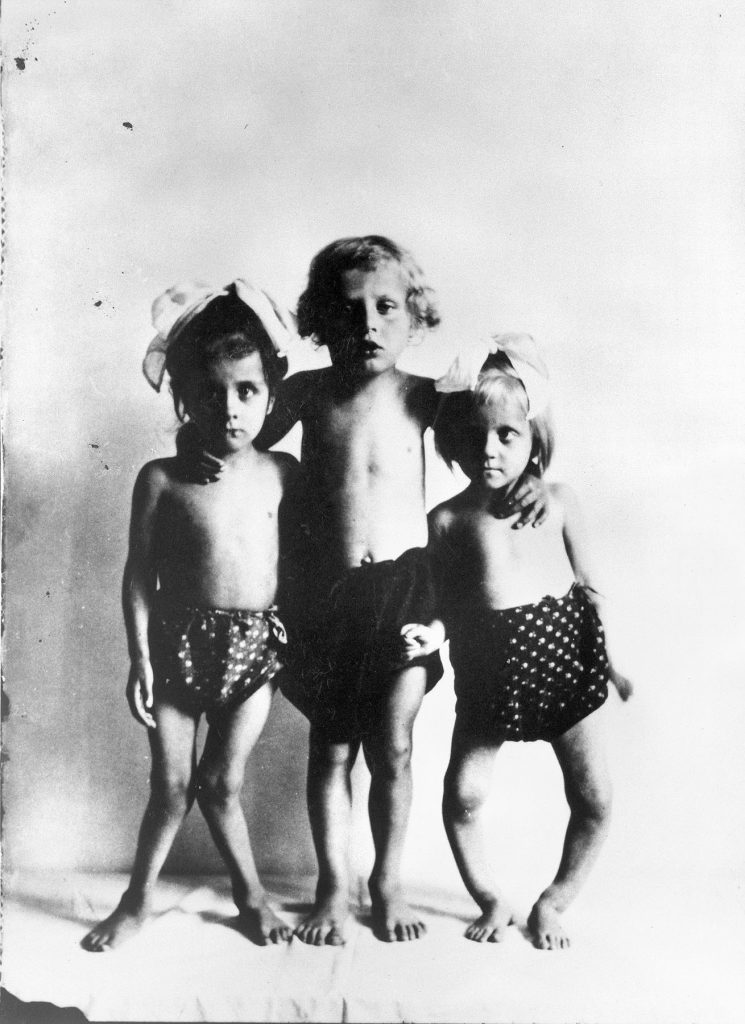

More than 12 percent of the 163 bones of infants and children found in this cemetery were deformed or lightweight—clear signs of rickets. A childhood disease primarily caused by vitamin D deficiency, rickets leads to a softening and distortion of the bones, which fail to mineralize properly. The signs were sobering. I put my head down and focused on my work, trying not to be overwhelmed by what I imagined to be the desperate conditions likely experienced by these victims of the industrial boom.

Today vitamin D and the consequences of its deficiency are big news. Without the vitamin, bones grow soft and weak, causing not only rickets but other bone disorders too. Vitamin D has even been linked to illnesses related to the immune system and to neoplastic diseases, including cancer. Most people with sufficient levels get the vitamin D they need from exposure to the sun’s ultraviolet rays. But those who live far from the sunny equator or in cloudy or smoggy locations, or who spend too much of their time indoors or under heavy clothing, need a vitamin boost.

At the time of the Industrial Revolution, when cities like Birmingham were cloaked in pollution, researchers had not yet unraveled these connections, making rickets an unfathomable plague. Only a few foods, including cod liver oil, are a natural source of the vitamin; in the 1920s and ’30s, vitamin D started to be added to foods like milk and infant supplements to tackle the problem.

Even so, vitamin D deficiency is still strikingly common: Worldwide estimates are difficult to capture, but a review of research from 46 countries showed up to one-third of populations studied had insufficient levels of vitamin D. These results accord with data from the Canadian government indicating that 32 percent of people have blood levels of the vitamin that are below what is advisable. In addition, a two-year study in Canada, published in 2007, turned up 104 confirmed cases of rickets in children—2.9 cases per 100,000. Though many people with milder deficiencies have no noticeable symptoms, they could be at risk of bone fractures or a weakened immune system.

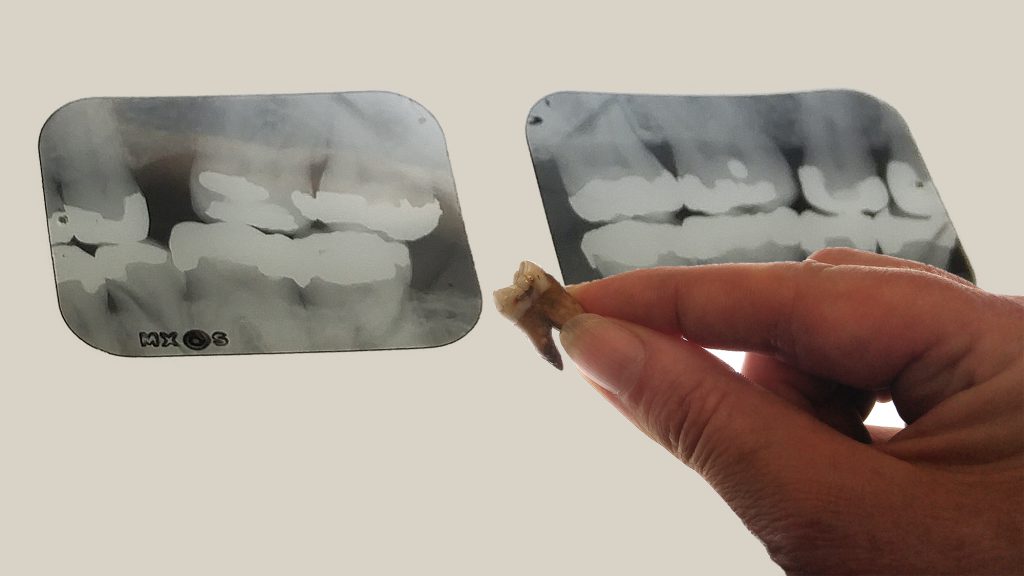

Our work started in that cemetery in Birmingham, then wound through ancient Rome, 19th-century Quebec City in Canada, and the late Pleistocene era in what is now the Middle East, when early humans were first stepping out of Africa. It was spurred by the search for more accurate and less destructive methods (that is, methods that do not require cutting teeth into pieces) for spotting vitamin D deficiency in ancient peoples. But in the end, it may have provided an unexpected benefit: a way to spot the deficiency in today’s children from simple dental X-rays.

The city of Birmingham is slightly farther north than Saskatoon, Canada, and far from the sunny climes of the equator. By the late 18th century, Birmingham was already heavily built up. Most people worked indoors, including children as young as 7 years of age. People covered themselves fully with clothing for modesty and to protect themselves from the cold. And terrible pollution from coal burning produced clouds of thick, black smoke that often blocked the sun. It came as no surprise to us, therefore, that cases of rickets were widespread. We even found adults who had been deficient in vitamin D for so long that they too had developed skeletal changes. Other adults had bones that were misshapen from childhood cases of rickets.

Our work on these remains allowed us to develop a series of criteria that can be applied to archaeological skeletons to investigate vitamin D deficiency. These include a lack of mineralization in the fast-growing ends of the long bones in children and specific fractures that form in affected adults.

Working alongside a team of colleagues and students for five years, I applied these criteria to archaeological skeletons from across the Western Roman Empire, one of the first large-scale, complex social systems in Europe. (The empire thrived from the first to the fifth centuries; we also had remains from some individuals who were buried in the sixth century.) By the end of this project, we had analyzed 3,541 individuals from 15 settlements (not all of whom could be included in the newly published study), from southern Spain to slightly north of Birmingham, finding rickets in about 5 percent of the subadult bones and vitamin D deficiency across all ages.

As suspected, rickets was clearly correlated with how far a person lived from the equator. But I found it surprising that settlement size didn’t also play a role: I had assumed that people living in larger towns would have spent more time in the shade of tall buildings and less time in the fields. But it seems that very few Roman towns had multistory buildings, and in all but the biggest settlements, most people still grew some of their own food. The only exception we found was the Roman port town of Ostia, at the mouth of the Tiber River in Italy, which was probably densely settled with multistory buildings and had a higher level of rickets than one might expect for its latitude.

Around this same time, in 2013, I also started working with one of my graduate students on other ways of accurately assessing vitamin D deficiency: We pieced together evidence to make a case for defects in the formation of dentin in teeth. The defects, called incremental interglobular dentin (IIGD), looked like little black halos or bubbles within the tooth. Because teeth grow in layers like annual rings on many trees, we could tell a person’s approximate age at the time their deficiency occurred.

We began by analyzing the dentition in skeletons with very clear evidence of past rickets, from sites in northern France and Quebec City, alongside the teeth and X-rays of living individuals. Some of the archaeological individuals had striking recurring episodes: One man from St. Matthew’s cemetery in Quebec had experienced four periods of severe vitamin D deficiency before he died at around 23 years of age.

Then we learned that in the 1950s, Reidar Sognnaes, a faculty member at the Harvard School of Dental Medicine, had cut open teeth from a wide variety of samples that today wouldn’t be handed over for such destructive analysis: teeth from humans who lived during the late Pleistocene, including some over 100,000 years old from Middle Eastern sites, and a series of Greek teeth from 3000 B.C. up to the 1940s. At least one of the teeth dating to the late Pleistocene had clear IIGD, and the Greek teeth showed a marked rise in the amount and severity of IIGD over time; some of their owners likely had rickets.

It turns out that teeth held many clues that would lead us into a whole new level of inquiry. By cutting teeth open, as Sognnaes had done, we can determine the level of deficiency along with the age at which it occurred. Yet we needed to find ways to analyze teeth that did not harm or destroy them. (Archaeological human remains are a precious resource that cannot be replaced.)

We discovered that the period of formation of the pulp chamber of molar teeth is critical: If more than just a slight vitamin D deficiency occurs at the time of formation (molars usually grow in children between 1 and 12 years of age), the shape of the pulp chamber changes in a way that can be spotted on dental X-rays. By working with a dentist whose patients generously donated their extracted teeth, we found that about 12 of 25 people had experienced vitamin D deficiency while their molars were forming. (They didn’t have any obvious symptoms and couldn’t do anything about their previous deficiencies at the time of our analysis.) The same X-ray technique also worked on 25 archaeological teeth.

This dental X-ray approach is still in its early days: We need to do further studies with more teeth to firm up the results, and we are looking at early, temporary teeth (“milk teeth”) from children too, to see if we can observe deficiencies in even younger children. (We have some milk teeth from a 19th-century site in Spain that show their owners suffered from the worst cases of rickets I have ever seen, as confirmed by extreme bowing deformities and fractures in their bones.) If it holds up to further testing, this technique could offer researchers a way to harmlessly assess large numbers of teeth from museum collections, helping to map out where, when, and why vitamin D deficiency occurred in the past.

This technique could have medical applications too. Most adults get dental X-rays every few years, as do some children. A quick check of those X-rays for vitamin D deficiency could help to identify suspect cases to refer for blood tests. Ideally, dentists could spot deficiencies while they are occurring—and before they become serious.

Working with the remains of British children who likely died as a result of unhealthy urban environments during the Industrial Revolution saddened and frustrated me. Little did I know at the time that my colleagues and I would develop techniques that shine a light on aspects of these children’s lives and the experiences of others who are often lost in a distant past. And in understanding their stories, we discovered a way to contribute to a current health issue. Maybe the past doesn’t have to repeat itself.